Breaking the Bank: Weakness in Financial AI Applications

Currently, threat actors possess limited access to the technology required to conduct disruptive operations against financial artificial intelligence (AI) systems, and the risk of this targeting type remains low. However, there is a high risk of threat actors leveraging AI as part of disinformation campaigns to cause financial panic. As AI financial tools become more commonplace, adversarial methods to exploit these tools will also become more available, and operations targeting the financial industry will be increasingly likely in the future.

AI Compounds Both Efficiency and Risk

Financial entities increasingly rely on AI-enabled applications to streamline daily operations, assess client risk, and detect insider trading. However, researchers have demonstrated how exploiting vulnerabilities in certain AI models can adversely affect the final performance of a system. Cyber threat actors can potentially leverage these weaknesses for financial disruption or economic gain in the future.

Recent advances in adversarial AI research highlight the vulnerabilities in some AI techniques used by the financial sector. Data poisoning attacks, or manipulating a model's training data, can affect the end performance of a system by leading the model to generate inaccurate outputs or assessments. Manipulating the data used to train a model can be particularly powerful if it remains undetected, since "finished" models are often trusted implicitly. Adversarial AI research also demonstrates that anomalies in a model do not necessarily point users toward a wrong answer, but instead redirect users away from the more correct output. Additionally, some cases of compromise require threat actors to obtain a copy of the model itself, through reverse engineering or compromising the machine learning pipeline of the target. The following are some vulnerabilities that assume this white-box knowledge of the models under attack:

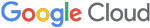

- Classifiers are used for detection and identification, such as object recognition in driverless cars and malware detection in networks. Researchers have demonstrated how these classifiers can be susceptible to evasion, meaning objects can be misclassified due to inherent weaknesses in the model (Figure 1).

- Researchers have highlighted how data poisoning can influence the outputs of AI recommendation systems. By changing reward pathways, adversaries can make a model suggest a suboptimal output such as reckless trades resulting in substantial financial losses. Additionally, groups have demonstrated a data-poisoning attack where attackers did not have control over how the training data was labeled.

- Natural language processing applications can analyze text and generate a basic understanding of the opinions expressed, also known as sentiment analysis. Recent papers highlight how users can input corrupt text training examples into sentiment analysis models to degrade the model's overall performance and guide it to misunderstand a body of text.

- Compromises can also occur when the threat actor has limited access to and understanding of the model's inner workings. Researchers have demonstrated how open access to the prediction functions of a model, as well as knowledge transfer, can also facilitate compromise.
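
As a rough illustration of the last point, the sketch below assumes a hypothetical black-box scoring service, `query_model`, that exposes only an approve/deny decision. An actor who can call it freely can recover its hidden decision threshold with a simple binary search. The function names, the single-feature model, and the threshold value are all assumptions made for this example and do not describe any real system.

```python
def make_black_box(secret_threshold):
    """Return a prediction function that hides its threshold (hypothetical model)."""
    def query_model(risk_score):
        # Only the final decision is exposed to the caller.
        return "deny" if risk_score >= secret_threshold else "approve"
    return query_model


def extract_threshold(query_model, low=0.0, high=100.0, tolerance=0.01):
    """Estimate the hidden threshold using only prediction queries.

    Assumes the model approves at `low`, denies at `high`, and is monotonic.
    Returns (estimate, number_of_queries).
    """
    queries = 0
    while high - low > tolerance:
        mid = (low + high) / 2
        queries += 1
        if query_model(mid) == "deny":
            high = mid  # threshold is at or below mid
        else:
            low = mid   # threshold is above mid
    return (low + high) / 2, queries


black_box = make_black_box(secret_threshold=62.5)
estimate, queries = extract_threshold(black_box)
print(f"estimated threshold: {estimate:.2f} after {queries} queries")
```

Real models have many more inputs, but the same idea applies: every answered query leaks a little information about the decision boundary. Rate limiting and monitoring for systematic probing are common ways to make this more expensive.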

How Financial Entities Leverage AI

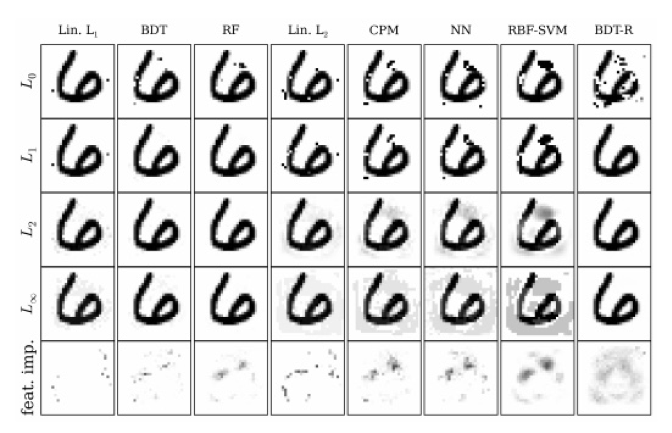

AI can process large amounts of information very quickly, and financial institutions are adopting AI-enabled tools to make accurate risk assessments and streamline daily operations. As a result, threat actors likely view financial service AI tools as an attractive target to facilitate economic gain or financial instability (Figure 2).

Sentiment Analysis

Use

Branding and reputation are variables that help analysts plan future trade activity and examine potential risks associated with a business. News and online discussions offer a wealth of resources to examine public sentiment. AI techniques, such as natural language processing, can help analysts quickly identify public discussions referencing a business and examine the sentiment of these conversations to inform trades or help assess the risks associated with a firm.

Potential Exploitation

Threat actors can potentially insert fraudulent data that could generate erroneous analyses regarding a publicly traded firm. For example, threat actors could distribute false negative information about a company that could have adverse effects on a business' future trade activity or lead to a damaging risk assessment. Manipulating the data used to train a model can be particularly powerful if it remains undetected, since "finished" models are often trusted implicitly.
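
The effect can be sketched with a deliberately tiny sentiment model. The example below assumes a toy word-scoring classifier and two hypothetical companies, "acme" and "rival"; production sentiment models are far more sophisticated, and the training texts here are invented for illustration only.

```python
from collections import Counter


def train_sentiment(examples):
    """Learn a per-word score: +1 for each positive use, -1 for each negative use."""
    scores = Counter()
    for text, label in examples:
        for word in text.lower().split():
            scores[word] += 1 if label == "positive" else -1
    return scores


def classify(scores, text):
    """Sum the learned word scores; unknown words contribute zero."""
    total = sum(scores[word] for word in text.lower().split())
    if total > 0:
        return "positive"
    if total < 0:
        return "negative"
    return "neutral"


# Hypothetical clean training data.
clean = [
    ("acme reports strong growth", "positive"),
    ("acme beats earnings forecast", "positive"),
    ("rival faces fraud probe", "negative"),
    ("rival misses earnings forecast", "negative"),
]
# Hypothetical poisoned examples: fabricated negative posts about "acme".
poison = [("acme growth fraud", "negative")] * 3

clean_model = train_sentiment(clean)
poisoned_model = train_sentiment(clean + poison)

headline = "acme growth continues"
print(classify(clean_model, headline))     # sentiment learned from clean data
print(classify(poisoned_model, headline))  # sentiment after poisoning
```

Three fabricated posts are enough to flip the toy model's reading of the same headline, which is why the provenance of scraped training text matters.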

Threat Actors Using Disinformation to Cause Financial Panic

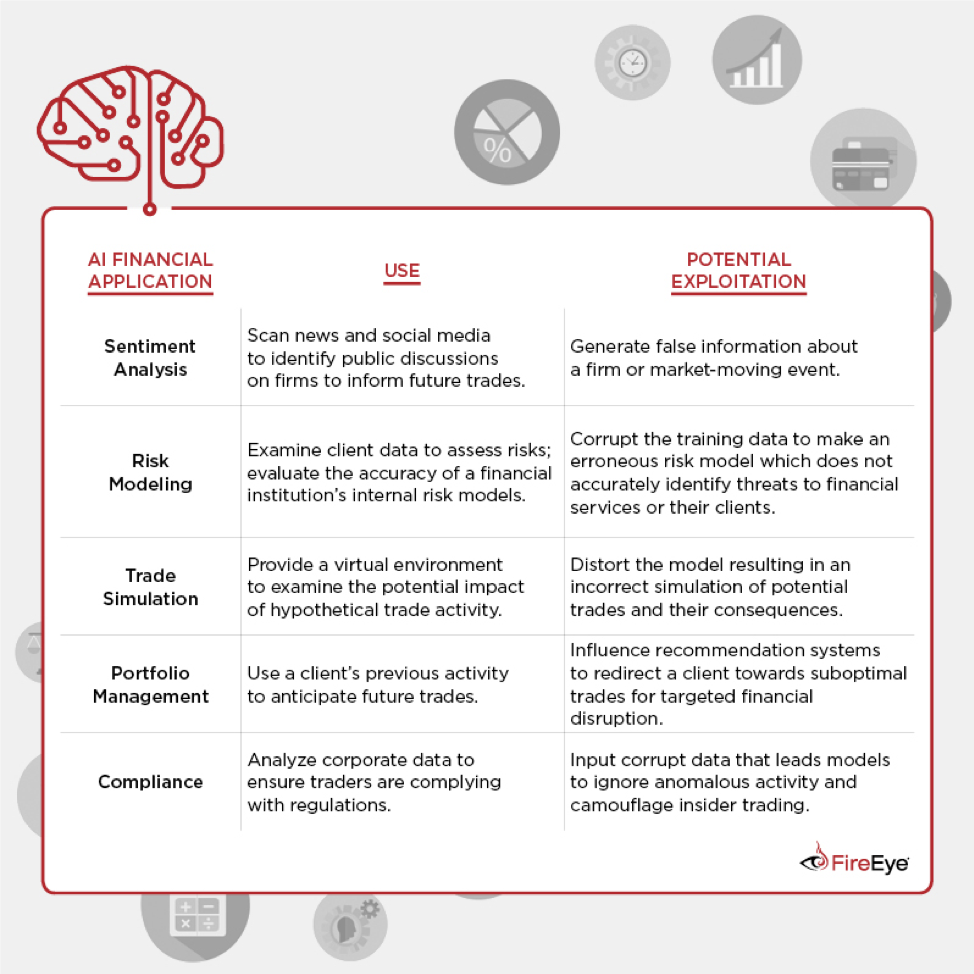

FireEye assesses with high confidence that there is a high risk of threat actors spreading false information that triggers AI-enabled trading and causes financial panic. Additionally, threat actors can leverage AI techniques to generate manipulated multimedia or "deep fakes" to facilitate such disruption.

False information can have considerable market-wide effects. Malicious actors have a history of distributing false information to facilitate financial instability. For example, in April 2013, the Syrian Electronic Army (SEA) compromised the Associated Press (AP) Twitter account and announced that the White House was attacked and President Obama sustained injuries. After the false information was posted, stock prices plummeted.

- Malicious actors distributed false messaging that triggered bank runs in Bulgaria and Kazakhstan in 2014. In two separate incidents, criminals sent emails, text messages, and social media posts suggesting bank deposits were not secure, causing customers to withdraw their savings en masse.

- Threat actors can use AI to create manipulated multimedia videos or "deep fakes" to spread false information about a firm or market-moving event. Threat actors can also use AI applications to replicate the voice of a company's leadership to conduct fraudulent trades for financial gain.

- We have observed one example where a manipulated video likely impacted the outcome of a political campaign.

Portfolio Management

Use

Several financial institutions are employing AI applications to select stocks for investment funds, or in the case of AI-based hedge funds, automatically conduct trades to maximize profits. Financial institutions can also leverage AI applications to help customize a client's trade portfolio. AI applications can analyze a client's previous trade activity and propose future trades analogous to those already found in a client's portfolio.

Potential Exploitation

Actors could influence recommendation systems to redirect a hedge fund toward irreversible bad trades, causing the company to lose money (e.g., flooding the market with trades that can confuse the recommendation system and cause the system to start trading in a way that damages the company).

Moreover, many of the automated trading tools used by hedge funds operate without human supervision and conduct trade activity that directly affects the market. This lack of oversight could leave future automated applications more vulnerable to exploitation as there is no human in the loop to detect anomalous threat activity.
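
One way to reintroduce a human in the loop is to hold unusually large recommendations for review instead of executing them automatically. The sketch below is a minimal example of that idea; the `Recommendation` type, the `route` function, and both limits are hypothetical choices for illustration, not a description of how any trading platform works.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Recommendation:
    symbol: str
    quantity: int
    price: float

    @property
    def notional(self):
        """Total value of the proposed trade."""
        return self.quantity * self.price


def route(rec, recent_notionals, max_notional=1_000_000.0, max_multiple=5.0):
    """Return "auto" to execute automatically or "review" to hold for a human.

    A trade is held if it exceeds an absolute cap, or if it is much larger
    than the median of recently executed trades.
    """
    if rec.notional > max_notional:
        return "review"
    if recent_notionals and rec.notional > max_multiple * median(recent_notionals):
        return "review"
    return "auto"


history = [4000.0, 5000.0, 6000.0]  # hypothetical recent trade sizes
print(route(Recommendation("XYZ", 100, 50.0), history))      # typical size
print(route(Recommendation("XYZ", 1000, 50.0), history))     # 10x the median
print(route(Recommendation("XYZ", 100_000, 50.0), history))  # above the cap
```

The trade-off is latency: every held trade waits for a person, so the limits need to be tuned to how much automation risk a firm is willing to accept.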

Threat Actors Conducting Suboptimal Trades

We assess with moderate confidence that manipulating trade recommendation systems poses a moderate risk to AI-based portfolio managers.

- The diminished human involvement with trade recommendation systems, coupled with the irreversibility of trade activity, suggests that adverse recommendations could quickly escalate to a large-scale impact.

- Additionally, operators can influence recommendation systems without access to sophisticated AI technologies; instead, they can use knowledge of the market and mass trades to degrade the application's performance.

- We have previously observed malicious actors targeting trading platforms and exchanges, as well as compromising bank networks to conduct manipulated trades.

- Both state-sponsored and financially motivated actors have incentives to exploit automated trading tools to generate profit, destabilize markets, or weaken foreign currencies.

- Russian hackers reportedly leveraged Corkow malware to place $500M worth of trades at non-market rates, briefly destabilizing the dollar-ruble exchange rate in February 2015. Future criminal operations can leverage vulnerabilities in automatic training algorithms to disrupt the market with a flood of automated bad trades.

Compliance and Fraud Detection

Use

Financial institutions and regulators are leveraging AI-enabled anomaly detection tools to ensure that traders are not engaging in illegal activity. These tools can examine trade activity, internal communications, and other employee data to ensure that workers are not capitalizing on advanced knowledge of the market to engage in fraud, theft, insider trading, or embezzlement.

Potential Exploitation

Sophisticated threat actors can exploit the weaknesses in classifiers to alter an AI-based detection tool and mischaracterize anomalous illegal activity as normal activity. Manipulating the model helps insider threats conduct criminal activity without fear of discovery.

Threat Actors Camouflaging Insider Threat Activity

Currently, threat actors possess limited access to the kind of technology required to evade these fraud detection systems, and we therefore assess with high confidence that the threat of this activity type remains low. However, as AI financial tools become more commonplace, adversarial methods to exploit these tools will also become more available, and insider threats leveraging AI to evade detection will likely increase in the future.

Underground forums and social media posts demonstrate there is a market for individuals with insider access to financial institutions. Insider threats could exploit weaknesses in AI-based anomaly detectors to camouflage nefarious activity, such as external communications, erratic trades, and data transfers, as normal activity.
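
A simple way to see how activity can be camouflaged is to look at a detector that scores each event in isolation. The sketch below assumes a basic z-score detector over hypothetical per-transfer sizes in megabytes; the detector, the baseline values, and the limit are all illustrative assumptions rather than a model of any deployed product.

```python
from statistics import mean, pstdev


def is_anomalous(value, baseline, z_limit=3.0):
    """Flag a value that lies more than `z_limit` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_limit


# Hypothetical history of normal transfer sizes (MB).
baseline = [90, 100, 110, 95, 105, 100, 98, 102]

bulk_transfer = 1000           # one large exfiltration attempt
chunks = [100] * 10            # the same data split into ordinary-looking pieces

print(is_anomalous(bulk_transfer, baseline))           # single large transfer
print(any(is_anomalous(c, baseline) for c in chunks))  # any chunk flagged?
```

Each chunk sits exactly at the baseline mean, so a per-event detector sees nothing unusual even though the total moved is identical. Scoring aggregates over a time window, in addition to individual events, is one possible countermeasure.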

Trade Simulation

Use

Financial entities can use AI tools that leverage historical data from previous trade activity to simulate trades and examine their effects. Quant-fund managers and high-speed traders can use this capability to strategically plan future activity, such as the optimal time of the day to trade. Additionally, financial insurance underwriters can use these tools to observe the impact of market-moving activity and generate better risk assessments.

Potential Exploitation

By exploiting inherent weaknesses in an AI model, threat actors could lull a company into a false sense of security regarding the way a trade will play out. Specifically, threat actors could find out when a company is training its model and inject corrupt data into a dataset being used to train the model. Subsequently, the end application generates an incorrect simulation of potential trades and their consequences. These models are regularly trained on the latest financial information to improve a simulation's performance, providing threat actors with multiple opportunities for data poisoning attacks. Additionally, some high-speed traders speculate that threat actors could flood the market with fake sell orders to confuse trading algorithms and potentially cause the market to crash.
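
The following sketch shows how a few injected records could distort such a simulation. It assumes a deliberately naive simulator that projects profit and loss by compounding the average historical daily return; the `simulate_pnl` function and all return figures are hypothetical and chosen only to make the effect visible.

```python
from statistics import mean


def simulate_pnl(position, daily_returns, days):
    """Project profit or loss by compounding the mean historical daily return."""
    avg_return = mean(daily_returns)
    return position * ((1 + avg_return) ** days - 1)


# Hypothetical recent history: a mildly declining asset.
clean_history = [-0.01, -0.02, 0.00, -0.01, 0.01, -0.02, -0.01, 0.00]
# Hypothetical poisoned records injected before retraining.
injected = [0.15, 0.20]

clean_projection = simulate_pnl(1_000_000, clean_history, days=5)
poisoned_projection = simulate_pnl(1_000_000, clean_history + injected, days=5)

print(f"clean data:    {clean_projection:,.0f}")
print(f"poisoned data: {poisoned_projection:,.0f}")
```

With clean data the toy simulator projects a loss; after two fabricated records it projects a gain on the same position, which is the false sense of security described above.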

FireEye Threat Intelligence has previously examined how financially motivated actors can leverage data manipulation for profit through pump and dump scams and stock manipulation.

Threat Actors Conducting Insider Trading

FireEye assesses with moderate confidence that the current risk of threat actors leveraging these attacks is low, as exploiting trade simulations requires sophisticated technology as well as additional insider intelligence regarding when a financial company is training its model. Despite these limitations, as financial AI tools become more popular, adversarial methods to exploit these tools are also likely to become more commonplace on underground forums and via state-sponsored threats. Future financially motivated operations could monitor or manipulate trade simulation tools as another means of gaining advanced knowledge of upcoming market activity.

Risk Assessment and Modeling

Use

AI can help the financial insurance sector's underwriting process by examining client data and highlighting features that it considers vulnerable prior to market-moving actions (joint ventures, mergers & acquisitions, research & development breakthroughs, etc.). Creating an accurate insurance policy ahead of market catalysts requires a risk assessment to highlight a client's potential weaknesses.

Financial services can also employ AI applications to improve their risk models. Advances in generative adversarial networks can help risk management by stress-testing a firm's internal risk model to evaluate performance or highlight potential vulnerabilities in a firm's model.

Potential Exploitation

If a country is conducting market-moving events with a foreign business, state-sponsored espionage actors could use data poisoning attacks to cause AI models to over- or underestimate the value or risk associated with a firm to gain a competitive advantage ahead of planned trade activity. For example, espionage actors could feasibly use this knowledge to help turn a joint venture into a hostile takeover or eliminate a competitor in a bidding process. Additionally, threat actors can exploit weaknesses in financial AI tools as part of larger third-party compromises against high-value clients.

Threat Actors Influencing Trade Deals and Negotiations

With high confidence, we consider the current risk to trade activity and business deals to be low, but as more companies leverage AI applications to help prepare for market-moving catalysts, these applications will likely become an attack surface for future espionage operations.

In the past, state-sponsored actors have employed espionage operations during collaborations with foreign companies to ensure favorable business deals. Future state-sponsored espionage activity could leverage weaknesses in financial modeling tools to help nations gain a competitive advantage.

Outlook and Implications

Businesses adopting AI applications should be aware of the risks and vulnerabilities introduced with these technologies, as well as the potential benefits. AI models are not static; they are routinely updated with new information to make them more accurate. This constant model training frequently leaves them vulnerable to manipulation. Companies should remain vigilant and regularly audit their training data to eliminate poisoned inputs. Additionally, where applicable, AI applications should incorporate human supervision to ensure that erroneous outputs or recommendations do not automatically result in financial disruption.
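
As one example of what a basic training-data audit might look like, the sketch below flags records whose modified z-score, computed from the median and the median absolute deviation (MAD), exceeds the conventional 3.5 cutoff. The `flag_outliers` helper and the sample volumes are hypothetical, and this check is only a first-pass filter under the assumption that poisoned records look statistically unusual.

```python
from statistics import median


def flag_outliers(values, limit=3.5):
    """Return indices of values whose MAD-based modified z-score exceeds `limit`."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # No spread at all: anything different from the median stands out.
        return [i for i, v in enumerate(values) if v != med]
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - med) / mad > limit
    ]


# Hypothetical daily trade volumes in a new training batch.
batch = [100, 102, 98, 101, 99, 103, 97, 100, 500, 100]

suspect = flag_outliers(batch)
print("indices held for review:", suspect)
```

Median-based statistics are used here because a handful of extreme values cannot drag them far, unlike the mean and standard deviation. Carefully crafted poison that stays within the normal range would not be caught by this check, so it complements rather than replaces provenance controls and human review.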

AI's inherent limitations also pose a problem as the financial sector increasingly adopts these applications for its operations. The lack of transparency in how a model arrived at its answer is problematic for analysts who are using AI recommendations to conduct trades. Without an explanation for its output, it is difficult to determine liability when a trade has negative outcomes. This lack of clarity can lead analysts to mistrust an application and eventually refrain from using it altogether. Additionally, the rise of data privacy laws may accelerate the need for explainable AI in the financial sector. Europe's General Data Protection Regulation (GDPR) stipulates that companies employing AI applications must have an explanation for decisions made by their models.

Some financial institutions have begun addressing this explainability problem by developing AI models that are inherently more transparent. Researchers have also developed self-explaining neural networks, which provide understandable explanations for the outputs generated by the system.